Best practices for mapping learning outcomes across didactic and clinical courses in pharmacy education

In pharmacy education, mapping learning outcomes is no longer a documentation exercise you complete once and file away. Under ACPE Standards 2025, programs are expected to demonstrate that didactic and clinical course alignment is intentional, assessed, and continuously improved, with evidence that traces program learning outcomes to course activities, assessment data, and experiential performance.

Recent licensure reporting shows why this matters. NABP’s reporting on NAPLEX outcomes reinforces two realities that assessment leaders already feel: cohort outcomes vary by program, and program-level performance must be interpreted and acted on, not just archived in annual reports.

The challenge is that alignment work spans multiple “languages” at once:

- Program learning outcomes mapping (program outcomes, COEPA where used, ACPE key elements)

- Course-level outcomes (syllabi, objectives, skill checklists)

- Assessment evidence (exam blueprinting, item performance, reliability, remediation)

- Experiential evidence (IPPE/APPE evaluations, entrustment, preceptor feedback)

- External indicators (NAPLEX/MPJE and other state-specific licensure indicators, AACP surveys, benchmarks)

Programs that do this well build an integrated assessment infrastructure supported by a curriculum mapping platform for pharmacy schools and reinforced by pharmacy programmatic assessment routines. This guide outlines best practices for teams who already know the fundamentals and want a workflow that is audit-ready, faculty-usable, and accreditation-relevant.

The practical constraint in pharmacy education right now

Across pharmacy education, programs are navigating more than ACPE Standards 2025 expectations. Many schools are managing enrollment pressure, public transparency of licensure outcomes, and faculty capacity constraints. In this environment, pharmacy curriculum mapping cannot become another parallel reporting system built on manual spreadsheets and disconnected reports.

If mapping learning outcomes increases workload without improving clarity, faculty disengage, and even well-designed systems become unsustainable. Simplification, therefore, is not about reducing rigor. It is about reducing fragmentation. When didactic and clinical course alignment, assessment tagging, experiential evaluations, licensure benchmarks, and CQI documentation live in separate systems, faculty spend more time reconciling reports than improving curriculum.

A unified curriculum mapping platform for pharmacy schools supports data-driven programmatic assessment in pharmacy education by connecting program learning outcomes mapping, assessment evidence, experiential performance, and accreditation documentation in one evidence structure, so accreditation readiness becomes part of daily workflow rather than a separate project.

Best practice 1: Establish one outcomes “spine” that works in both didactic and experiential settings

The most common reason maps fail in practice is misaligned taxonomy. If didactic faculty map to one set of outcomes while experiential teams map to another, the program ends up with parallel systems that do not reconcile during committee review or accreditation documentation.

A durable outcomes spine typically includes:

- Program outcomes (your institutional/program outcomes)

- ACPE-aligned outcomes language (Standards 2025 expectations reflected in your internal structure)

- Curricular threads that intentionally span didactic + experiential learning (e.g., patient safety, calculations, law/jurisprudence, clinical reasoning, interprofessional skills)

This is the foundation for credible pharmacy curriculum mapping because everything else (assessment evidence, experiential evaluations, remediation, and CQI) must be traceable to a stable set of program outcomes.

What faculty do in practice:

- Use CompetencyGenie™ (AI-powered tagging tool) to standardize how items and assessments are classified to your outcomes framework so faculty aren’t reinventing mapping logic course by course.

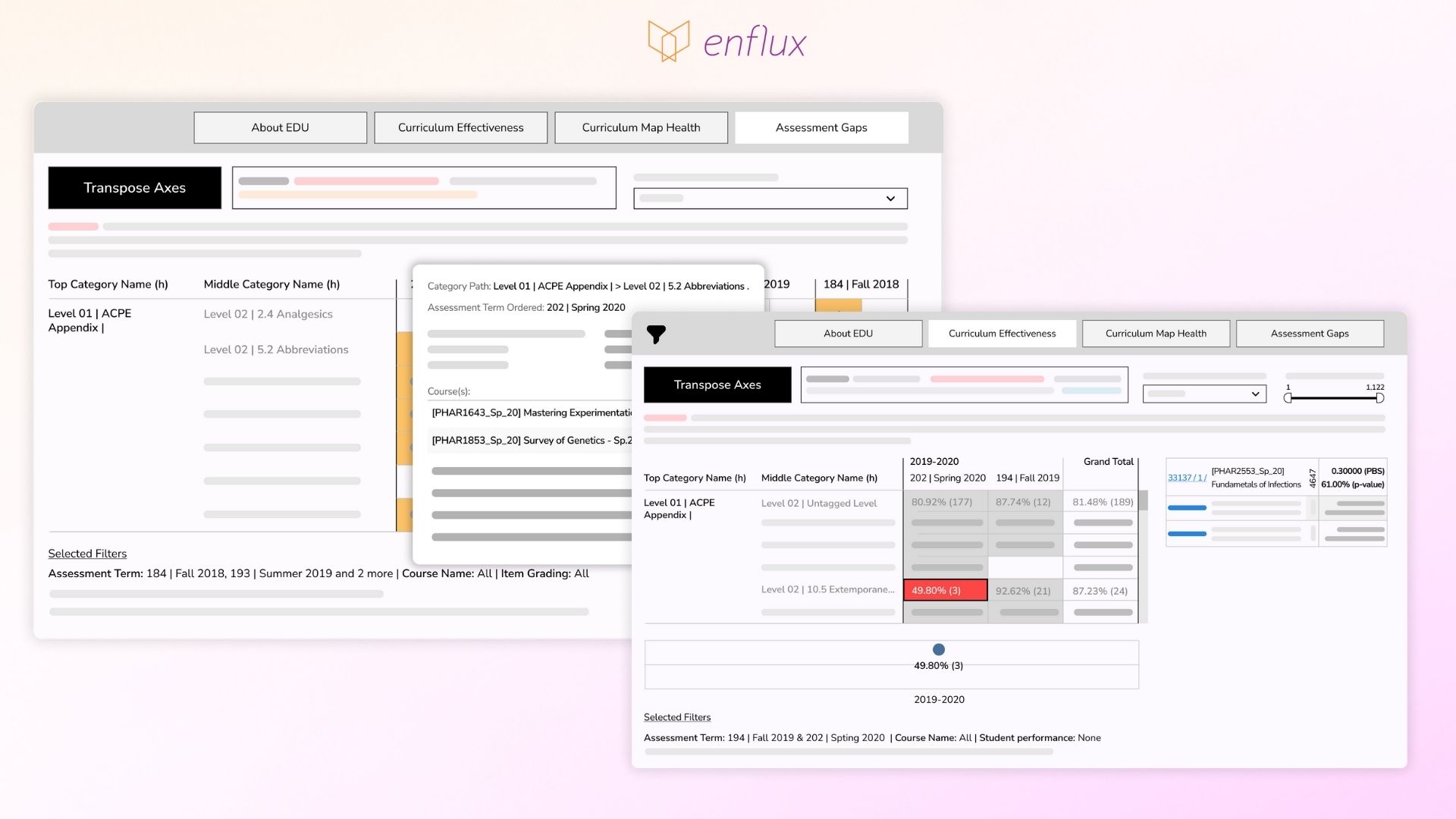

- Use the Curriculum Effectiveness and Gap Analysis dashboard to validate that outcomes on the curriculum map are actually being assessed and automatically flag outcomes included in the map but not assessed within your assessment blueprinting structure (e.g., ExamSoft categories).
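The "mapped but not assessed" check described above is, at its core, a set comparison. The following is a minimal sketch of that idea, not a description of how any dashboard implements it. The course codes, outcome IDs, and the function name `mapped_but_not_assessed` are all hypothetical.

```python
# Hypothetical curriculum map: outcomes each course claims to address.
curriculum_map = {
    "PHAR 510": {"PO1", "PO2"},
    "PHAR 620": {"PO2", "PO3"},
    "APPE 701": {"PO3", "PO4"},
}

# Hypothetical outcome tags found on assessment items (e.g., exam categories).
assessment_tags = {
    "PHAR 510": {"PO1"},
    "PHAR 620": {"PO2", "PO3"},
    "APPE 701": {"PO3"},
}


def mapped_but_not_assessed(curriculum_map, assessment_tags):
    """Return {course: sorted outcomes that are mapped but have no tagged assessment}."""
    gaps = {}
    for course, mapped in curriculum_map.items():
        # Courses with no tagged assessments at all count as fully unassessed.
        missing = mapped - assessment_tags.get(course, set())
        if missing:
            gaps[course] = sorted(missing)
    return gaps


gaps = mapped_but_not_assessed(curriculum_map, assessment_tags)
for course, outcomes in gaps.items():
    print(f"{course}: mapped but not assessed -> {', '.join(outcomes)}")
```

Running the comparison per course, rather than across the whole program, keeps a gap visible even when the same outcome happens to be assessed somewhere else in the curriculum.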

On-demand webinar:

Streamline Exam Tagging with AI: Aligning with ACPE Standards 2025

Best practice 2: Map where learning is measured, not just where it is mentioned

Programs often “map the syllabus,” then wonder why the map doesn’t help decision-making. A syllabus-level map can prove intent, but it rarely proves measurement.

High-utility mapping connects outcomes to:

- Assessments (exam blueprints, OSCEs, skills checks)

- Item-level evidence (psychometrics, performance by outcome)

- Experiential evaluations (IPPE/APPE performance, preceptor ratings, entrustment decisions)

- Longitudinal indicators (progression trends, remediation outcomes)

When outcomes performance is connected to assessment evidence, programs can identify which outcomes are driving underperformance early, target support faster, and document the interventions for struggling students, rather than waiting for course failure or end-of-year remediation.
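As a rough illustration of outcome-level performance, the sketch below rolls tagged item results up into a score per student and outcome, then flags scores below a cutoff. The data, the outcome tag names, and the 0.7 threshold are assumptions made for the example. A real program would set its own cutoffs and would likely require a minimum number of items per outcome before flagging anyone.

```python
from collections import defaultdict

# Hypothetical item-level results: (student, outcome tag, points earned, points possible).
item_results = [
    ("s1", "calculations", 1, 1),
    ("s1", "calculations", 0, 1),
    ("s1", "patient_safety", 1, 1),
    ("s2", "calculations", 0, 1),
    ("s2", "calculations", 0, 1),
    ("s2", "patient_safety", 1, 1),
]


def outcome_scores(results):
    """Aggregate item results into a proportion correct per (student, outcome)."""
    earned = defaultdict(float)
    possible = defaultdict(float)
    for student, outcome, points, max_points in results:
        earned[(student, outcome)] += points
        possible[(student, outcome)] += max_points
    return {key: earned[key] / possible[key] for key in possible}


def flag_below(scores, threshold=0.7):
    """Return sorted (student, outcome) pairs scoring below the assumed threshold."""
    return sorted(key for key, score in scores.items() if score < threshold)


scores = outcome_scores(item_results)
flags = flag_below(scores)
```

Here both students pass the patient safety items but fall below the cutoff on calculations, which points to an outcome-specific intervention rather than generic remediation.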

What faculty do in practice:

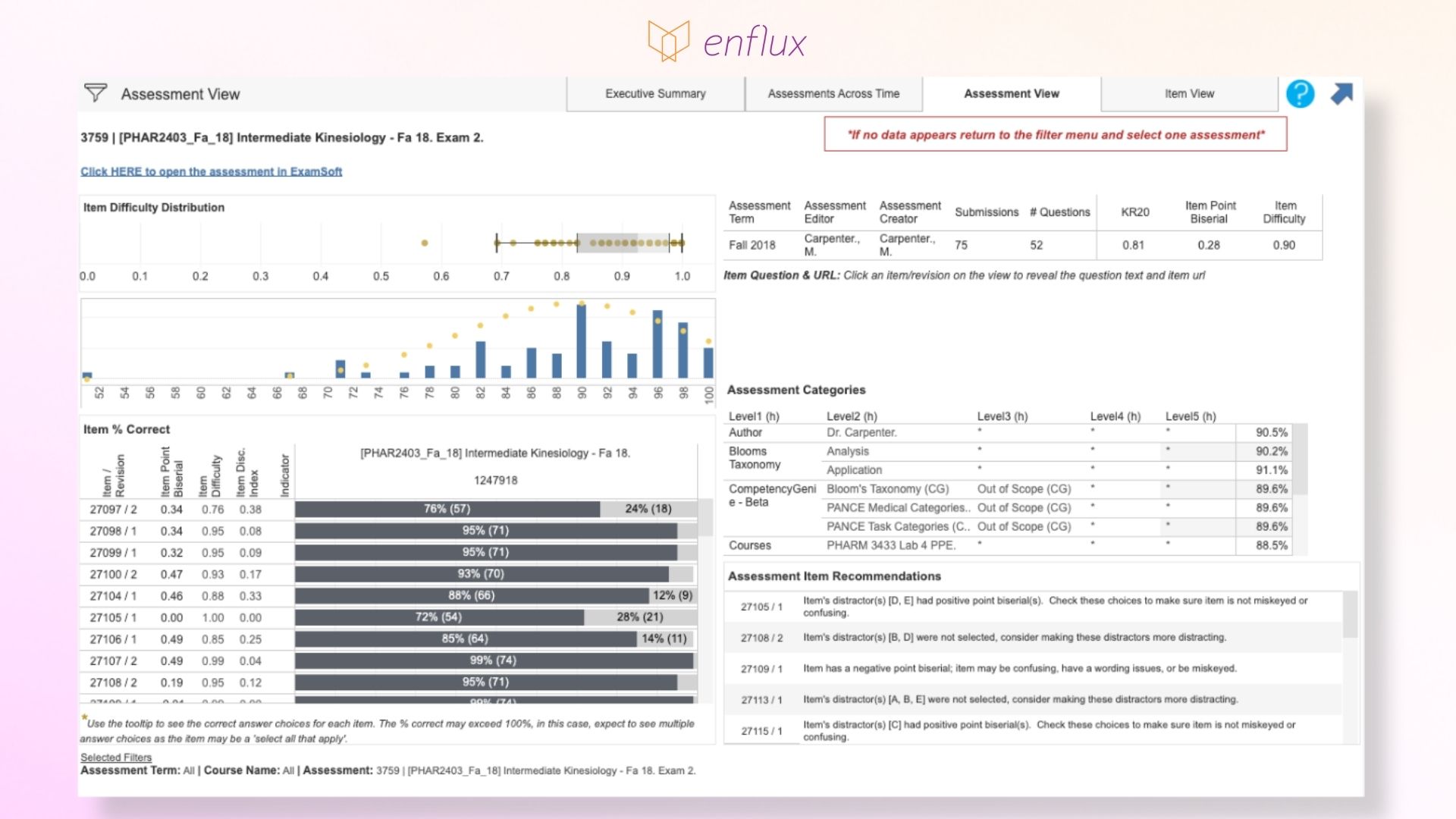

- Use the Assessment & Item Effectiveness dashboard to connect assessment performance back to tagged outcomes/competencies and review key item/assessment indicators (e.g., reliability and quality signals) so your mapping of learning outcomes is backed by measurement evidence.

Assessment and Item Effectiveness Dashboard. Assessment view

Best practice 3: Treat didactic–experiential alignment as a longitudinal design problem, not a handoff

Didactic and clinical course alignment breaks down when experiential expectations assume competencies were mastered didactically, but the data shows inconsistent reinforcement or insufficient assessment depth.

Programs that map well across settings do two things:

- Define where key competencies are introduced, reinforced, and validated before advanced practice experiences

- Monitor whether performance improves as students move through the sequence (not just whether content appears “somewhere”)

This is the practical core of pharmacy programmatic assessment: a student’s performance trajectory should match the program’s intended developmental pathway.
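One way to make the introduced, reinforced, validated sequence checkable is to record the level at which each course addresses an outcome and then test for missing or out-of-order steps. This is a sketch under stated assumptions: the I/R/V labels, term names, outcome names, and both helper functions are hypothetical, and "improving" is simplified to "mean performance never drops between stages".

```python
# Hypothetical map: ordered course sequence per outcome, with the level addressed:
# "I" = introduced, "R" = reinforced, "V" = validated.
progression = {
    "clinical_reasoning": [("P1 Fall", "I"), ("P2 Spring", "R"), ("P3 Spring", "V")],
    "calculations": [("P1 Fall", "I"), ("P3 Spring", "V")],
    "law": [("P2 Fall", "R"), ("P3 Fall", "V")],
}

REQUIRED_ORDER = ["I", "R", "V"]


def progression_issues(sequence):
    """List missing or out-of-order levels for one outcome's course sequence."""
    levels = [level for _, level in sequence]
    issues = [f"missing {lvl}" for lvl in REQUIRED_ORDER if lvl not in levels]
    # The first occurrence of each level that is present should follow I -> R -> V.
    first_seen = [levels.index(lvl) for lvl in REQUIRED_ORDER if lvl in levels]
    if first_seen != sorted(first_seen):
        issues.append("levels out of order")
    return issues


def improves(stage_means):
    """True if mean performance never drops from one stage to the next."""
    return all(later >= earlier for earlier, later in zip(stage_means, stage_means[1:]))


report = {}
for outcome, sequence in progression.items():
    issues = progression_issues(sequence)
    if issues:
        report[outcome] = issues
```

In this example, `calculations` is never reinforced and `law` is never introduced before validation, so both appear in `report`. The `improves` helper can then be applied to cohort means for outcomes whose sequence is structurally complete.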

What faculty do in practice:

- Use the Curriculum Effectiveness & Gap Analysis dashboard to review performance longitudinally across multiple courses and years on ExamSoft categories, especially for outcomes that should strengthen before APPE readiness decisions.

Curriculum Effectiveness & Gap Analysis Dashboard Overview

Best practice 4: Make mapping of learning outcomes usable at the committee level

Even strong maps fail if they cannot be used in routine governance:

- Curriculum committee decisions need defensible evidence across courses and cohorts

- Assessment committees need clear patterns tied to outcomes, not isolated exam reports

- Experiential committees need outcome-linked trends (not only site-level anecdotes)

The “best” map is the one that supports the decisions your program actually makes, especially under accreditation pressure.

What faculty do in practice:

- Use the ActionPlan® Management System to document data-informed curriculum decisions and loop closure: capture the exact data view, assign owners, define milestones, and track completion over time.

- Use ActionPlan® reports to bundle multiple actions into documentation for committee review and self-study compilation.
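For teams thinking about what a loop-closure record needs to hold, the sketch below models the chain from evidence to decision to owner to milestones to follow-up as a small data structure. It is illustrative only and does not reflect the schema of any product. Every class name, field name, and value is hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class Milestone:
    description: str
    due: date
    done: bool = False


@dataclass
class ActionRecord:
    """Hypothetical loop-closure record; field names are illustrative."""
    outcome: str
    evidence: str  # reference to the data view that triggered the decision
    decision: str
    owner: str
    milestones: List[Milestone] = field(default_factory=list)
    follow_up: Optional[str] = None  # evidence gathered after the change

    def is_closed(self) -> bool:
        """Closed only when milestones exist, all are done, and follow-up is recorded."""
        return (
            bool(self.milestones)
            and all(m.done for m in self.milestones)
            and self.follow_up is not None
        )


record = ActionRecord(
    outcome="calculations",
    evidence="Outcome performance view, P2 cohort (hypothetical reference)",
    decision="Add a calculations skills check before APPE readiness review",
    owner="Assessment committee chair",
    milestones=[Milestone("Pilot skills check", date(2026, 3, 1))],
)
```

Requiring recorded follow-up evidence before a record counts as closed keeps "action taken" distinct from "loop closed", which is the distinction committee review usually depends on.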

Best practice 5: Triangulate mapping evidence with external outcomes (without overreacting to a single metric)

Accreditors (and internal stakeholders) increasingly expect triangulation, meaning a demonstration of how multiple data sources align:

- Internal outcomes performance (course + program levels)

- Assessment quality and blueprinting evidence

- Experiential performance indicators

- Licensure outcomes (NAPLEX/MPJE/CPJE-style signals)

- Survey data (AACP instruments, preceptor/alumni feedback)

Licensure outcomes, for example, are high-salience signals with visible variability across schools. Mapping of learning outcomes becomes the interpretive infrastructure: it helps you connect external performance patterns to internal curricular decisions.

What faculty do in practice:

- Use the NAPLEX® dashboard and MPJE® dashboard to compare performance to state and national benchmarks and contextualize where outcomes may reflect curricular gaps versus cohort effects.

- Use the AACP® Program Quality Surveys dashboard to benchmark survey results (students, preceptors, alumni, faculty) and connect perception/experience trends back to mapped outcomes and CQI priorities.

Case Study

Discover how Florida A&M University College of Pharmacy improved exam tagging and boosted NAPLEX pass rates using a learning analytics platform

Mapping of learning outcomes that reduces risk, improves readiness, and sustains CQI

Outcomes mapping becomes strategically valuable when it does three things at once:

- Clarifies where learning is developed across didactic and clinical settings

- Connects outcomes to defensible evidence (assessment + experiential)

- Supports loop closure that is visible, repeatable, and easy to retrieve

Programs that treat mapping as a living system also gain a practical advantage. They can identify at-risk students earlier, target intervention strategies to specific outcomes, and document improvement actions continuously, so accreditation readiness is the byproduct of daily practice, not an emergency project.

If you’re preparing for ACPE Standards 2025 and want outcomes mapping that faculty can actually use, Enflux can help you operationalize a single evidence system across outcomes mapping, assessment quality, student performance, licensure benchmarks, and CQI documentation.

FAQs

Start by building one outcomes “spine” that both didactic and experiential teams agree to use (program outcomes + ACPE-aligned language + key curricular threads). Then keep the mapping consistent by using a single system of record rather than course-owned spreadsheets.

The fastest approach is to look for outcomes that are mapped but not assessed, outcomes that are over-assessed in one part of the curriculum and missing in another, and outcomes that appear repeatedly without a clear progression from foundational to applied to practice-based performance.

You prove alignment by showing traceability from program outcomes to course activities to assessment evidence and experiential performance, then documenting what actions were taken when gaps were identified. In Enflux, this is done by pairing curriculum mapping views with evidence dashboards and then documenting “loop closure” in the ActionPlan® Management System, where programs capture the data view that triggered the decision, assign responsibility, track completion, and record follow-up evidence for accreditation review.

The credibility comes from using AI to standardize and accelerate tagging, while faculty still control the taxonomy, review exceptions, and validate results through dashboard-based auditing. Programs use AI tools like CompetencyGenie™ to reduce manual classification effort and improve consistency across courses, which makes downstream curriculum mapping and accreditation reporting far more reliable.

Early intervention requires visibility into which outcomes are driving underperformance before failure patterns become entrenched. Early alert systems and platforms allow programs to identify at-risk students early in the semester and see which competencies they struggle with most consistently, enabling targeted support rather than generic remediation. When those outcome-level patterns are connected back to the curriculum map, interventions become both student-centered and accreditation-relevant.

Accreditors want to see the chain from evidence to decision to action to follow-up. Instead of writing narrative summaries after the fact, programs can document loop closure continuously by capturing the supporting data view, recording the change, assigning an owner, tracking completion, and saving the follow-up review results.

Committees should prioritize outcomes that are high-stakes for readiness (clinical reasoning, calculations, patient safety, law/jurisprudence), outcomes with inconsistent evidence between didactic and experiential settings, and outcomes tied to repeated underperformance patterns. Enflux supports this prioritization by making it easy to compare outcome performance across courses and cohorts, integrate licensure benchmarking through NAPLEX® and MPJE® dashboards, and document the resulting CQI actions through ActionPlan® reports for accreditation readiness.

Ready to make learning outcomes mapping audit-ready and faculty-usable?

See how Enflux connects didactic and clinical course alignment to real evidence, so your program can act on outcomes data, support early intervention, and stay continuously prepared for ACPE Standards 2025.