How triangulating course evaluation, exam, and outcomes data strengthens program decision-making

Most academic programs are not lacking data. Course evaluations reside in one system, assessment results in another, and outcomes evidence is often maintained separately through accreditation, curriculum, student data collection methods, or assessment workflows. Individually, each source provides useful information. Collectively, however, disconnected systems make it difficult to interpret performance patterns in a meaningful and timely way.

That fragmentation has a cost. When learner feedback, assessment performance, curriculum mapping, and outcomes data are reviewed separately, programs risk making decisions based on incomplete evidence. Important relationships between student performance, curricular design, and competency development become harder to identify, and opportunities for earlier intervention are often missed.

Deloitte’s 2026 higher education trends report flags this as a strategic risk. The challenge is becoming more pronounced as accreditation expectations, institutional accountability pressures, and the role of AI in higher education continue to evolve. Increasingly, programs are moving away from fragmented reporting models and toward triangulating their data through higher education analytics platforms that connect evaluation, assessment, and outcomes evidence into a single view.

Why disconnected reporting persists in most programs

Most programs already understand the value of triangulating evidence across multiple sources. The challenge is operational. In many institutions, this work still depends on manual reconciliation across spreadsheets, exported reports, and disconnected systems maintained by different stakeholders.

That approach was manageable when reporting cycles were slower and accreditation expectations were narrower. It is increasingly difficult to sustain within modern program evaluation environments, where institutions are expected to demonstrate continuous improvement, competency alignment, and defensible decision-making supported by multiple forms of evidence.

In practice, the problems that learning analytics in higher education needs to resolve in 2026 are too complex for manual reconciliation:

- learner feedback, assessment performance, and outcomes evidence remain separated across systems

- findings from one source are interpreted without sufficient context from others

- curricular decisions rely heavily on course grades rather than competency-level analysis

- quality improvement actions are implemented but not consistently documented
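The first two problems in the list above can be made concrete with a small sketch. It assumes each source can be exported as a per-course summary keyed by a shared course ID. The course IDs, values, and thresholds below are hypothetical illustrations, not data or defaults from any real system.

```python
# Hypothetical per-course exports from three separate systems (illustrative values only).
course_evals = {"PHAR101": 4.4, "PHAR202": 3.1, "PHAR303": 4.0}    # mean rating, 1-5 scale
exam_scores = {"PHAR101": 0.82, "PHAR202": 0.79, "PHAR303": 0.61}  # mean proportion correct
outcome_rates = {"PHAR101": 0.90, "PHAR202": 0.88}                 # share of students meeting the outcome


def triangulate(evals, exams, outcomes):
    """Merge the three sources by course ID, keeping gaps visible as None."""
    courses = sorted(set(evals) | set(exams) | set(outcomes))
    return {
        c: {"eval": evals.get(c), "exam": exams.get(c), "outcome": outcomes.get(c)}
        for c in courses
    }


def flag(row, eval_floor=3.5, exam_floor=0.70):
    """List the reasons a course merits review (thresholds are assumptions)."""
    reasons = []
    if any(value is None for value in row.values()):
        reasons.append("missing source")
    if row["eval"] is not None and row["eval"] < eval_floor:
        reasons.append("low learner feedback")
    if row["exam"] is not None and row["exam"] < exam_floor:
        reasons.append("low exam performance")
    return reasons


combined = triangulate(course_evals, exam_scores, outcome_rates)
review = {c: flag(row) for c, row in combined.items() if flag(row)}
print(review)
```

Here the course with low feedback but solid exam results and the course with weak exam results and no outcomes record appear side by side, which is the context a single-source report would not provide.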

Connecting curriculum mapping and assessment evidence

Effective triangulation depends on visibility across the curriculum, assessments, competencies, and outcomes students are expected to achieve.

Many programs already collect detailed assessment data at the item level. The challenge is analyzing student assessment data in relation to the curriculum map in a way that supports meaningful interpretation. Without that connection, a low-performing assessment result may reflect several different issues: gaps in student learning, weaknesses in assessment design, or insufficient competency development within the curriculum itself.

This is where curriculum mapping in higher education becomes operational rather than purely administrative. When assessment outcomes are connected directly to courses, competencies, and longitudinal performance trends, programs can evaluate whether competencies are being meaningfully developed across the curriculum, not simply documented within it.
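As a minimal sketch of what connecting item-level results to the curriculum map can look like, the example below rolls item scores up to competencies. The item IDs, competency codes, and scores are hypothetical, and the roll-up is a simple unweighted mean chosen for illustration rather than a recommended method.

```python
from collections import defaultdict

# Hypothetical curriculum-map fragment: exam item -> competencies it assesses.
item_tags = {
    "Q1": ["C1"],
    "Q2": ["C1", "C2"],
    "Q3": ["C2"],
    "Q4": [],  # item not yet tagged to any competency
}

# Hypothetical item-level results: proportion of students answering correctly.
item_results = {"Q1": 0.90, "Q2": 0.70, "Q3": 0.50, "Q4": 0.95}


def competency_performance(tags, results):
    """Average item scores per competency and report items with no tags."""
    buckets = defaultdict(list)
    untagged = []
    for item, score in results.items():
        competencies = tags.get(item, [])
        if not competencies:
            untagged.append(item)
        for comp in competencies:
            buckets[comp].append(score)
    summary = {comp: round(sum(s) / len(s), 2) for comp, s in buckets.items()}
    return summary, untagged


summary, untagged = competency_performance(item_tags, item_results)
print(summary)   # per-competency means
print(untagged)  # items that cannot contribute to competency-level analysis
```

Reporting untagged items separately matters because a high score on an item with no competency tag contributes nothing to competency-level evidence.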

Programs that connect these data sources move beyond debating which signal is “correct” and toward understanding how multiple signals collectively describe program performance.

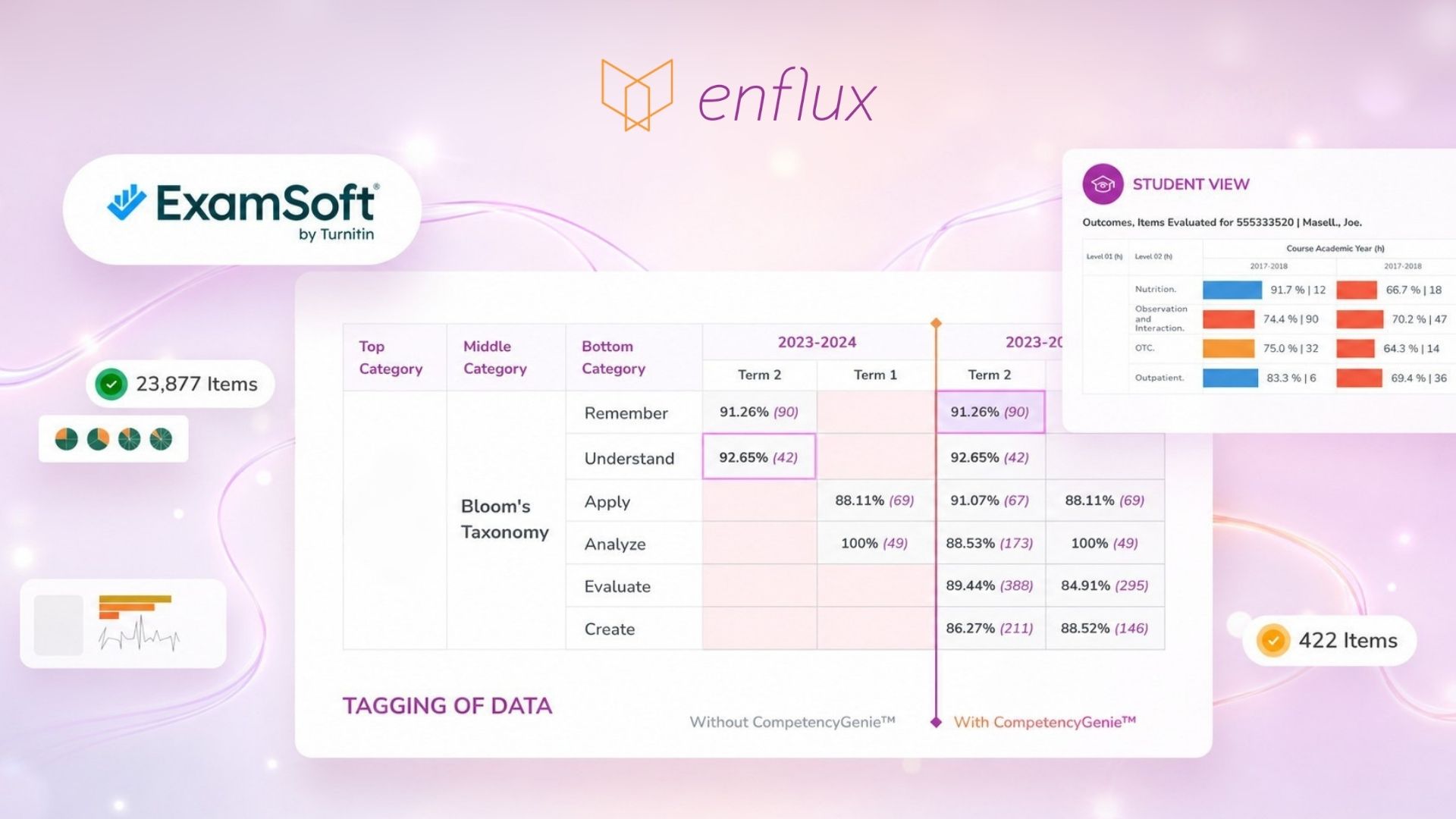

That connection depends on consistent tagging across courses and cohorts. Tools such as CompetencyGenie™ support this process by standardizing how assessment items are tagged to competencies, standards, and outcomes frameworks. The value lies not in automation alone, but in creating a more reliable and scalable connection between what students experience and how they perform, and what programs are expected to demonstrate under their accrediting body’s standards.

Documenting decisions as part of continuous quality improvement (CQI)

Triangulated evidence only becomes valuable when it informs documented action.

Many programs already make thoughtful curricular and assessment adjustments based on evaluation findings. The difficulty is sustaining a consistent process for documenting how evidence informed decisions, who was responsible for implementation, and whether interventions produced measurable improvement over time. Unless those decisions are recorded in a shared process, it becomes difficult to answer the questions accreditors increasingly ask:

- What evidence prompted the decision?

- What action was taken in response?

- Who is responsible for follow-up?

- Did the change produce the intended outcome?

Moreover, accreditors expect programs to demonstrate not only that data was collected, but that findings were analyzed, acted upon, and revisited through structured continuous quality improvement processes.
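The four questions above map naturally onto a single record per decision. The sketch below is one hypothetical way to structure such a record. The class name, fields, and example values are assumptions for illustration and do not describe any particular product's data model.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class ImprovementAction:
    """Hypothetical CQI record: evidence, action, owner, and measured result."""
    evidence: str          # what evidence prompted the decision
    action: str            # what action was taken in response
    owner: str             # who is responsible for follow-up
    opened: date
    baseline: float        # metric value when the decision was made
    target: float          # value the change is intended to reach
    follow_up: Optional[float] = None  # metric value at re-measurement, if any

    def status(self) -> str:
        """Answer whether the change produced the intended outcome."""
        if self.follow_up is None:
            return "awaiting follow-up"
        return "target met" if self.follow_up >= self.target else "target not met"


record = ImprovementAction(
    evidence="Competency C2 averaged 0.60 across tagged exam items",
    action="Add a case-based workshop before the midterm",
    owner="Course director",
    opened=date(2026, 1, 15),
    baseline=0.60,
    target=0.70,
)
first = record.status()   # no follow-up measurement recorded yet
record.follow_up = 0.74   # hypothetical result from the next cohort
second = record.status()
print(first, "->", second)
```

Keeping the baseline and target on the record itself means the follow-up question can be answered from the record alone, without reconstructing the original analysis.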

The ActionPlans® Management System by Enflux supports this workflow by helping programs connect triangulated evidence directly to documented improvement initiatives. Programs can capture the data views that informed decisions, assign responsibility, define milestones, and monitor progress longitudinally.

As a result, self-studies become less focused on retrospective reconstruction and more reflective of an ongoing, operationalized process of program evaluation and improvement.

That shift is what programs in health professions education are building toward as they turn assessment data into a forward-looking strategy.

From fragmented reporting to triangulated evidence

For institutions working to strengthen programmatic assessment, the advantage is not simply access to more data. It is the ability to connect learner feedback, assessment performance, curriculum alignment, and outcomes evidence into a coherent framework for decision-making.

Enflux supports this process by bringing course evaluations, curriculum maps, assessment data, and outcomes evidence into a unified analytics platform designed to support learning analytics in higher education. Through integrated analytics, AI-supported tagging with CompetencyGenie™, and ActionPlans® workflows, programs can move from fragmented reporting toward a more sustainable and evidence-informed approach to continuous improvement.

The result is less faculty time spent reconciling data and more time focused on interpreting evidence, evaluating curricular effectiveness, and supporting accreditation readiness as an ongoing institutional process rather than a periodic reporting exercise.

Ready to connect your program's data?

Explore how Enflux brings course evaluation, exam, and outcomes data into one connected view.