How PA programs can build a sustainable self-assessment system for ARC-PA accreditation and C1.01 reporting

Preparing for ARC-PA accreditation, particularly under Standard C1.01 and the self-study process, rarely reveals a lack of data. Most PA programs are data-rich. The challenge is what it takes to make that data usable in a way that demonstrates continuous, program-level self-assessment and defensible program effectiveness.

The data is everywhere. Student performance lives in exam and learning systems. Evaluations sit in survey tools. Clinical feedback comes from experiential platforms. Remediation is often tracked in spreadsheets. PANCE® results arrive separately, and other pieces come from dashboards or registrar reports.

Individually, each of these sources serves its purpose. Collectively, they create a familiar burden: before any meaningful analysis can begin, faculty and staff must locate, extract, reconcile, and standardize data across systems that were never designed to work together.
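To make that reconciliation step concrete, here is a minimal sketch in Python. It assumes each system can export rows keyed by a student identifier; the source names (`exams`, `clinical`), the field names, and the sample values are all hypothetical and do not reflect any particular platform's export format.

```python
from collections import defaultdict


def normalize_id(raw: str) -> str:
    """Trim whitespace and unify case so IDs from different systems match."""
    return raw.strip().upper()


def reconcile(sources: dict) -> dict:
    """Merge rows from every source into one record per student."""
    merged = defaultdict(dict)
    for source_name, rows in sources.items():
        for row in rows:
            student = normalize_id(row["student_id"])
            for field, value in row.items():
                if field != "student_id":
                    # Prefix each field with its source to keep provenance visible.
                    merged[student][f"{source_name}.{field}"] = value
    return dict(merged)


def missing_sources(merged: dict, expected: list) -> dict:
    """List, per student, the expected sources that contributed no data."""
    gaps = {}
    for student, record in merged.items():
        present = {key.split(".", 1)[0] for key in record}
        absent = [name for name in expected if name not in present]
        if absent:
            gaps[student] = absent
    return gaps


# Hypothetical sample exports; the IDs differ only in case and spacing.
sources = {
    "exams": [
        {"student_id": "pa-001", "didactic_avg": 84.0},
        {"student_id": "PA-002", "didactic_avg": 71.5},
    ],
    "clinical": [
        {"student_id": " PA-001 ", "preceptor_rating": 4.2},
    ],
}

merged = reconcile(sources)
gaps = missing_sources(merged, ["exams", "clinical"])
print(merged["PA-001"])
print(gaps)
```

Even in a toy example like this, most of the effort goes into matching identifiers and spotting gaps rather than into analysis, which is the burden described above.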

That burden becomes most visible during preparation for the ARC-PA self-study report (SSR), but it is not limited to that moment.

Operational demands of ARC-PA Standard C1.01 in PA program accreditation

Under the ARC-PA 6th edition Standards, programs are expected to demonstrate continuous compliance through ongoing, program-level self-assessment. In practice, that means the work of collecting, cleaning, organizing, and analyzing data is not episodic; it is constant.

For many programs, this work is distributed across a small group of faculty or staff, often layered on top of teaching, clinical coordination, and administrative responsibilities. Time that could be spent interpreting trends or improving the curriculum is instead spent reconciling datasets, validating accuracy, and reformatting information into something usable.

Within this context, ARC-PA Standard C1.01 becomes the operational center of PA program accreditation. It requires programs to move beyond assembling individual data points and instead demonstrate how multiple measures are analyzed in relation to one another.

This includes establishing clear benchmarks that define what acceptable performance looks like and using those benchmarks to determine whether outcomes meet expectations, fall short, or reflect meaningful improvement over time. It also requires triangulating data, bringing together multiple sources of evidence to evaluate the same question from different perspectives. This helps ensure that conclusions are not based on a single data point but are supported by consistent patterns across the program.
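As a rough illustration of how benchmarks and triangulation fit together, the Python sketch below classifies each measure against its benchmark and flags a concern only when at least two independent sources agree. The measure names, benchmark values, and the two-source threshold are assumptions chosen for the example; they are not prescribed by ARC-PA.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Measure:
    source: str                       # where the evidence comes from
    value: float                      # current result
    benchmark: float                  # program-defined acceptable level
    previous: Optional[float] = None  # prior cohort's result, if known


def classify(m: Measure) -> str:
    """Compare one measure with its benchmark and its prior value."""
    if m.value < m.benchmark:
        return "below benchmark"
    if m.previous is not None and m.value > m.previous:
        return "meets benchmark, improving"
    return "meets benchmark"


def triangulate(measures, min_agreeing=2):
    """Flag a concern only when several sources fall short together."""
    below = [m.source for m in measures if classify(m) == "below benchmark"]
    return len(below) >= min_agreeing, below


# Hypothetical evidence for a single content area.
measures = [
    Measure("course_exam", value=72.0, benchmark=75.0, previous=74.0),
    Measure("standardized_exam", value=68.0, benchmark=70.0),
    Measure("student_survey", value=4.1, benchmark=3.5, previous=3.9),
]

for m in measures:
    print(m.source, "->", classify(m))

concern, agreeing_sources = triangulate(measures)
print(concern, agreeing_sources)
```

Here two exam-based measures fall short while the survey does not, so the concern rests on a pattern across sources rather than on one number.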

More importantly, programs must show how those analyses lead to decisions and measurable program improvement.

This is where many PA programs struggle most. The expectation is not simply to present data, but to demonstrate a clear line through each stage:

data → analysis → decision → outcome

In practice, that is difficult to sustain.

- Data may be available but not aligned across sources.

- Analyses may be performed but not consistently documented.

- Decisions may be made, but not explicitly tied back to evaluation findings.

Over time, even well-intentioned processes can become fragmented without a structure that supports continuity, transparency, and faculty engagement.
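One way to picture the structure that keeps this chain intact is a simple record format with an audit step. The Python sketch below is illustrative only: the `Finding` and `Decision` types, their fields, and the sample entries are hypothetical, and a real program would adapt them to its own documentation practices.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Finding:
    finding_id: str
    data_sources: List[str]  # which data fed the analysis
    analysis: str            # what the analysis concluded


@dataclass
class Decision:
    description: str
    finding_id: Optional[str] = None  # link back to the evaluation finding
    outcome: Optional[str] = None     # measured result, recorded later


def audit(findings, decisions):
    """Report where the data -> analysis -> decision -> outcome chain breaks."""
    known = {f.finding_id for f in findings}
    acted_on = {d.finding_id for d in decisions if d.finding_id in known}
    return {
        "decisions_without_finding": [
            d.description for d in decisions if d.finding_id not in known
        ],
        "decisions_without_outcome": [
            d.description for d in decisions if d.outcome is None
        ],
        "findings_without_decision": sorted(known - acted_on),
    }


# Hypothetical records for one review cycle.
findings = [
    Finding("F1", ["course_exam", "standardized_exam"], "Scores below benchmark"),
    Finding("F2", ["preceptor_evaluations"], "Ratings declined at two sites"),
]
decisions = [
    Decision("Add review sessions", finding_id="F1", outcome="Mean score rose"),
    Decision("Revise syllabus template"),  # not tied to any finding
]

report = audit(findings, decisions)
print(report)
```

Run periodically, a check like this surfaces the three gaps listed above before they have to be reconstructed from memory.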

The challenge, then, is not producing evidence at the time of review. It is maintaining a learning analytics system for PA programs that consistently transforms dispersed data into integrated insight without overextending faculty capacity. The limiting factor is rarely data availability; it is the time and structure required to make that data meaningful and usable for program evaluation.

A more structured approach to C1.01 reporting and program evaluation

To meet the expectations of ARC-PA accreditation, many programs are moving toward more integrated approaches supported by learning analytics for PA education.

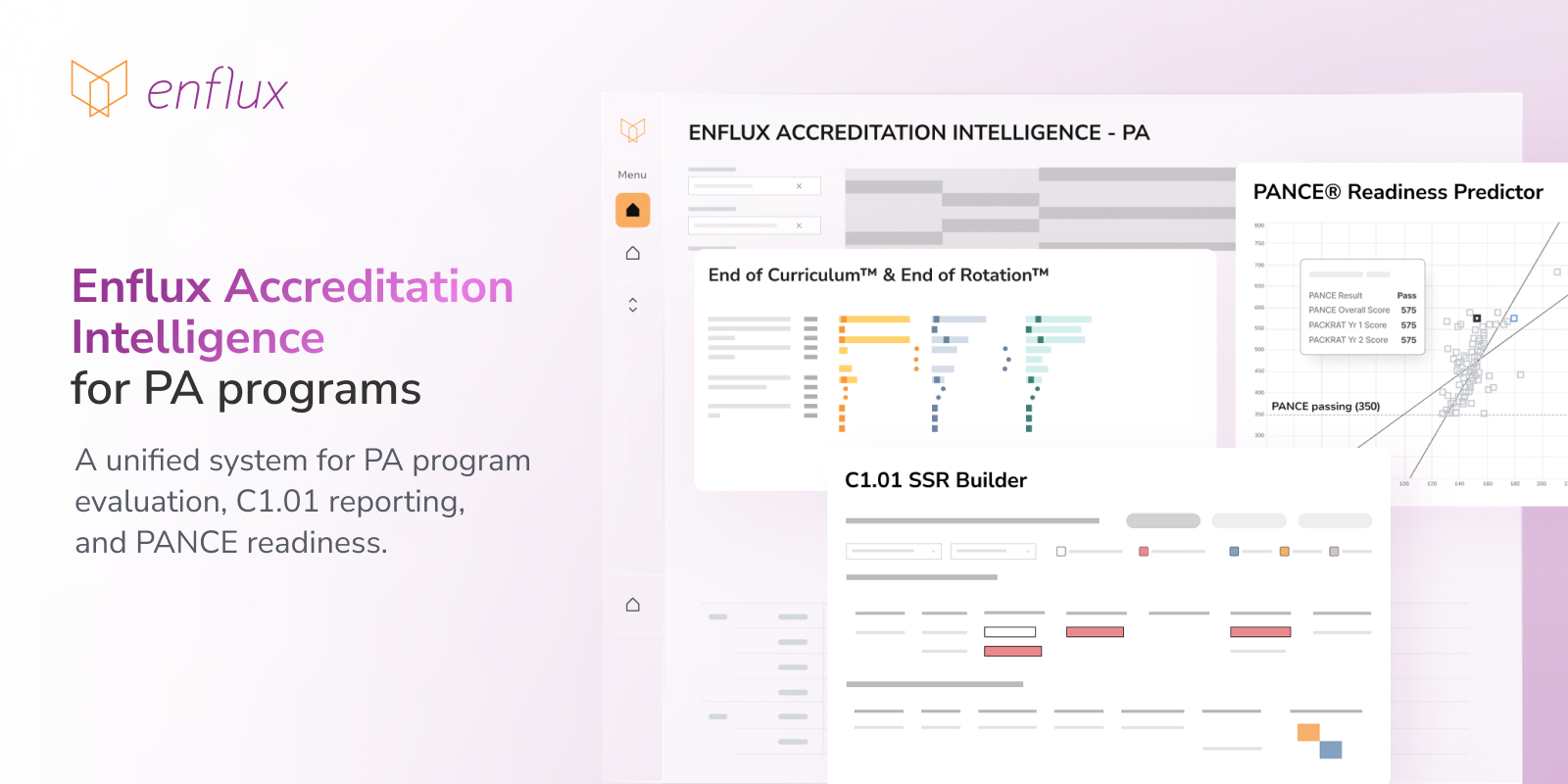

Enflux Accreditation Intelligence for PA programs reflects this shift by providing a unified analytics environment designed to support both C1.01 reporting and broader program evaluation.

Within this environment, two core capabilities support the ongoing self-assessment process:

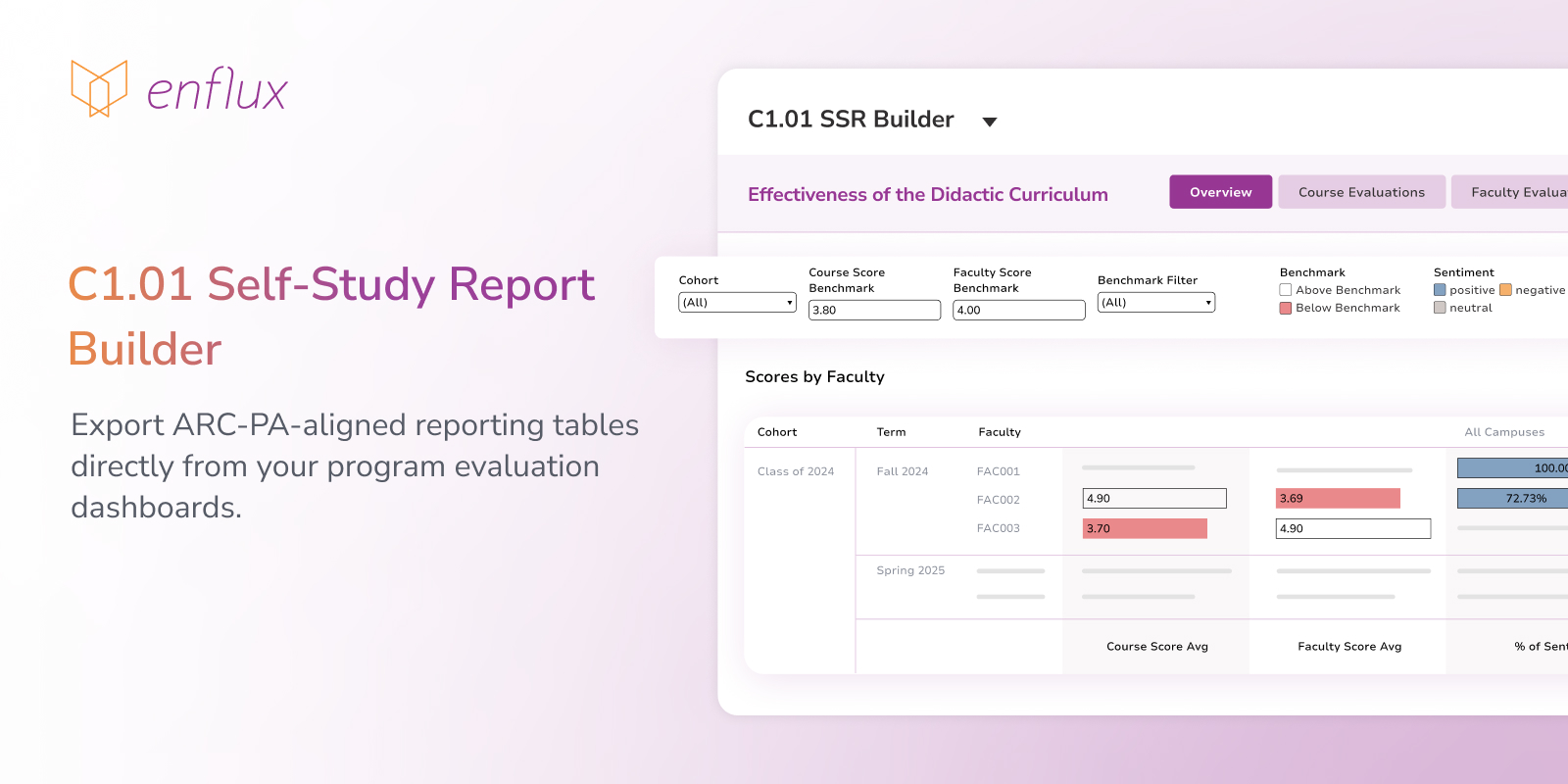

C1.01 SSR Builder

Supports preparation of the ARC-PA self-study report by organizing ongoing evaluation data into structured, accreditation-aligned reporting outputs.

Programs can generate submission-ready C1.01 tables directly from validated dashboard data, reducing the need for manual aggregation and formatting. In this model, ongoing evaluation becomes directly aligned with accreditation reporting.

PANCE® Readiness Predictor

Supports longitudinal analysis of student and cohort performance by connecting outcomes across EoR®, PACKRAT®, EoC®, and PANCE®.

This enables programs to:

- identify trends across cohorts and content areas

- benchmark performance across standardized assessments

- detect early indicators of student risk

- evaluate the impact of curricular changes on outcomes

Rather than reviewing disconnected reports, programs can analyze performance across the full assessment lifecycle in a single view.

From fragmented data to continuous accreditation readiness

Within Enflux Accreditation Intelligence for PA programs, the C1.01 SSR Builder and PANCE® Readiness Predictor support a more structured and sustainable approach to ongoing self-assessment for PA program accreditation.

Instead of reconstructing evidence for accreditation cycles, programs maintain a continuously updated, structured view of curriculum effectiveness, student outcomes, and improvement efforts aligned with ARC-PA Standard C1.01.

At a practical level, this means:

- establishing a unified evidence base across the curriculum

- connecting analysis across didactic and clinical phases

- linking evaluation findings to documented action and measurable outcomes

Within Enflux Accreditation Intelligence for PA programs, this process is supported by integrated learning analytics, the ActionPlans® Management System, and CompetencyGenie™, which automates assessment tagging and strengthens the consistency of evaluation data. Together, these capabilities help programs maintain alignment between data, decision-making, and continuous improvement.

The result is not just more efficient reporting, but a stronger, more transparent approach to program evaluation and continuous quality improvement, one that supports continuous readiness for ARC-PA accreditation.

Want to simplify ARC-PA reporting and strengthen your self-assessment system?

Explore Enflux Accreditation Intelligence for PA programs or schedule a demonstration to see how your program can move from fragmented data to continuous accreditation readiness.